How To Do Effective Project Estimating

A step-by-step process and model-driven approach for creating a project estimate: imagining a report development project for a product-based company.


Vishvesh Kaushik : May 3, 2023

Machine learning teams are like pirates: they like solving challenging ML problems with esoteric tools. Enterprises, on the other hand, are like the navy: they need processes, repeatability, and security.

ML development tools like notebooks work well for development. However, more is needed to keep models performing at enterprise grade. This involves integrating machine learning models into existing enterprise systems while ensuring interoperability, scalability, and security. It also involves implementing robust monitoring and alerting to detect and diagnose issues in a timely manner, and using automated testing and deployment pipelines to ensure consistency and repeatability in the development process.

Without that support, models lose performance over time. This is called model decay.

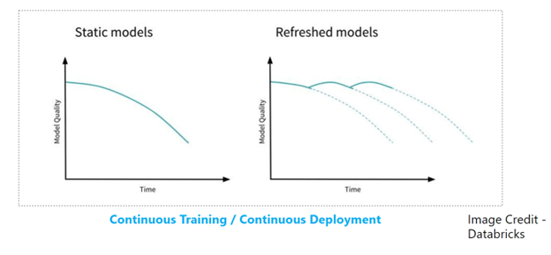

Two main reasons for models losing their performance and usability are Data Drift and Concept Drift.

Data Drift: Data drift refers to changes in the input data distribution over time, which can lead to reduced model performance. Monitoring data drift involves comparing the current data distribution to the data distribution used to train the model and detecting significant differences.
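One way to make that comparison concrete is the Population Stability Index (PSI), which bins a feature using the training data and measures how far the current bin shares have moved. The sketch below is only an illustration using the Python standard library: the function name, the bin count, and the synthetic samples are assumptions made for this example, not part of any Azure ML API.

```python
import math
import random


def population_stability_index(reference, current, bins=10):
    """Return the PSI between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def bin_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_shares = bin_shares(reference)
    cur_shares = bin_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


# Synthetic data: the "shifted" sample has a different mean than training.
rng = random.Random(0)
training = [rng.gauss(0.0, 1.0) for _ in range(5000)]
same = [rng.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]

psi_same = population_stability_index(training, same)
psi_shifted = population_stability_index(training, shifted)
print(f"PSI, unchanged distribution: {psi_same:.3f}")
print(f"PSI, shifted distribution:   {psi_shifted:.3f}")
```

The unchanged sample should score close to zero and the shifted one much higher. A commonly quoted rule of thumb treats a PSI above about 0.25 as a significant shift, but the right threshold depends on the feature and the business case.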

Concept Drift: Concept drift refers to changes over time in the underlying relationship between the input data and the outcome the model is trying to predict. Monitoring concept drift involves detecting changes in the model's accuracy or performance on specific subsets of the data.
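A simple way to watch performance on specific subsets is to compute accuracy per segment for two periods and flag the segments that dropped. This is a minimal sketch: the segment names, the record layout, and the 0.05 tolerance are hypothetical choices for illustration.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """Map each segment to the share of correct predictions in it.

    Each record is a (segment, predicted_label, true_label) tuple.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for segment, predicted, actual in records:
        totals[segment] += 1
        hits[segment] += int(predicted == actual)
    return {segment: hits[segment] / totals[segment] for segment in totals}


def degraded_segments(baseline, current, max_drop=0.05):
    """Return the segments whose accuracy fell by more than max_drop."""
    return sorted(
        segment
        for segment, accuracy in current.items()
        if segment in baseline and baseline[segment] - accuracy > max_drop
    )


# Hypothetical labelled predictions from two periods.
last_quarter = [
    ("retail", 1, 1), ("retail", 0, 0), ("retail", 1, 1), ("retail", 0, 0),
    ("wholesale", 1, 1), ("wholesale", 0, 0), ("wholesale", 1, 0), ("wholesale", 0, 0),
]
this_quarter = [
    ("retail", 1, 0), ("retail", 0, 0), ("retail", 1, 1), ("retail", 0, 1),
    ("wholesale", 1, 1), ("wholesale", 0, 0), ("wholesale", 1, 0), ("wholesale", 0, 0),
]

baseline = accuracy_by_segment(last_quarter)
current = accuracy_by_segment(this_quarter)
flagged = degraded_segments(baseline, current)
print(flagged)
```

Here only the retail segment is flagged, even though the wholesale segment was never perfect: the check looks at the change relative to the baseline, not at the absolute score.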

Continuous training and continuous deployment are needed to keep models usable.

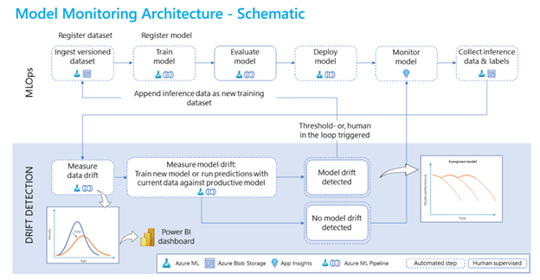

We can depict an enterprise-grade ML pipeline schematically as follows:

Azure Machine Learning, together with Azure DevOps, provides a robust toolset that supports the otherwise arduous process of keeping models performant.

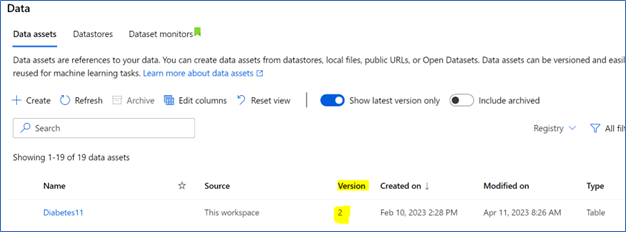

Registered Data Sets

Azure ML registered data sets help keep track of data that is used in experiments.

ML models need to be retrained periodically. Azure ML registered data sets keep the data in one place and make it easy to track data versions.

Registered Model

Many models may need to be trained and evaluated before an optimal model is found for the problem. The model registry makes it easy to keep track of the candidate models that can be promoted to production.

When the model changes because of retraining or a code change, a new version of the model is registered. The registry keeps the version history, so it is easy to see which version is which.

Azure ML registries allow sharing the model across workspaces and subscriptions.

Model Evaluation

Models can be evaluated with held-out test data. We compare the model output with the true labels to see if the model is fit for deployment.
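The comparison against true labels can be turned into a simple deployment gate. The sketch below assumes a binary classifier and uses illustrative metric thresholds. The function names and the threshold values are hypothetical and would be set per business case.

```python
def evaluate_predictions(y_true, y_pred, positive=1):
    """Compute accuracy, precision, and recall for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def fit_for_deployment(metrics, thresholds):
    """True only if every metric meets its minimum threshold."""
    return all(metrics[name] >= minimum for name, minimum in thresholds.items())


# Hypothetical test labels and model outputs.
y_true = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 1, 1, 0, 1, 0]

metrics = evaluate_predictions(y_true, y_pred)
approved = fit_for_deployment(metrics, {"accuracy": 0.75, "recall": 0.75})
print(metrics, approved)
```

Gating on more than one metric is a deliberate choice: a model can have high accuracy while missing most of the positive cases, which recall would catch.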

Model Deployment

Azure ML provides batch endpoints and real-time endpoints to which the model can be deployed. We should prefer batch endpoints unless there is a business need for real-time predictions.

It is also possible to deploy to AKS endpoints, but configuring and managing AKS clusters adds overhead. The Azure-provided endpoints are a managed service and are easier to deploy and manage.

For workloads above approximately 400 requests per second, AKS endpoints will give better performance.
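The guidance in this section can be summarized as a small decision helper. This is only a sketch of the rule of thumb described above: the function and its return labels are hypothetical, and the 400 requests per second figure is the approximate value quoted in this post, so it is best confirmed with a load test on your own workload.

```python
def choose_deployment_target(needs_real_time, peak_requests_per_second=0.0,
                             aks_threshold_rps=400.0):
    """Pick a deployment target following the rule of thumb in this post."""
    if not needs_real_time:
        # Default choice when predictions are not needed immediately.
        return "batch endpoint"
    if peak_requests_per_second > aks_threshold_rps:
        # Approximate threshold from the post; verify with load testing.
        return "AKS endpoint"
    return "managed real-time endpoint"


print(choose_deployment_target(needs_real_time=False))
print(choose_deployment_target(needs_real_time=True, peak_requests_per_second=50))
print(choose_deployment_target(needs_real_time=True, peak_requests_per_second=900))
```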

Model Monitoring

Once the model is deployed, it is important to keep monitoring its performance with labelled test data. We can periodically compare the results given by the model to the true labels. Depending on the business case, a weekly to monthly cadence is usually enough.

If performance degrades, the model may need to be retrained with new data, and in some cases the model code may need to change as well.
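A periodic check like this can feed an automated retraining trigger. The sketch below averages the most recent checks and compares them to the score recorded at deployment. The window size, the tolerance, and the weekly accuracy values are assumptions chosen for illustration.

```python
from statistics import mean


def needs_retraining(baseline_score, recent_scores, tolerance=0.03, window=3):
    """True when the average of the last `window` checks is more than
    `tolerance` below the score recorded at deployment time."""
    if len(recent_scores) < window:
        return False  # not enough evidence yet
    return baseline_score - mean(recent_scores[-window:]) > tolerance


# Hypothetical weekly accuracy measurements after deployment.
baseline_accuracy = 0.91
weekly_accuracy = [0.91, 0.90, 0.89, 0.86, 0.85, 0.84]

print(needs_retraining(baseline_accuracy, weekly_accuracy[:3]))
print(needs_retraining(baseline_accuracy, weekly_accuracy))
```

Averaging over a window instead of reacting to a single check avoids retraining on one noisy measurement, at the cost of reacting a little later to a real decline.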

Many ML teams can develop models but struggle to take them to production. Azure ML provides a robust toolset to support enterprise-grade ML model pipelines. With knowledge of the tools available within Azure ML, teams can meet enterprise security standards, achieve automation, and keep their models performant.

If you would like to discuss this topic further or have any questions, please don't hesitate to contact us today.
